Demartek Evaluation: Marvell FastLinQ 41000 Series 10GbE Performance, iSCSI Offload Competitive Evaluation and Storage Spaces Direct Use Cases

July 2019

Non-Volatile Memory Express (NVMe) and Storage Class Memory (SCM) are offered in current-generation servers with Intel Xeon Scalable Processors. The resulting gains in server storage performance are driving demand for more network bandwidth: Virtual Machines (VMs) and containers are deployed more densely on servers, the Internet Small Computer Systems Interface (iSCSI) is being used for high-bandwidth storage solutions, and Hyper-Converged Infrastructure (HCI) needs extensive bandwidth for inter-node communications. A 10GbE network has become necessary to support this infrastructure.

It is also important to consider additional Network Interface Card (NIC) features that may apply to a deployment and enhance overall performance, such as iSCSI hardware offload or Remote Direct Memory Access (RDMA). Marvell FastLinQ 41000 Series adapters are equipped with one of the most extensive collections of these Ethernet features on the market.

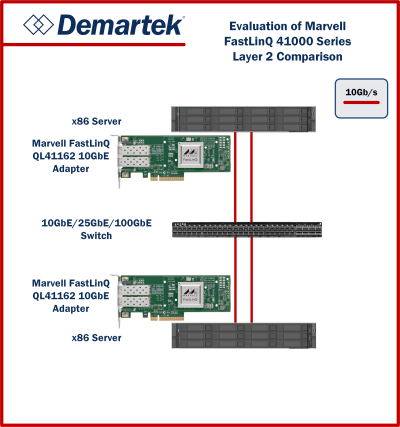

Marvell commissioned Demartek to evaluate the benefits of the Marvell FastLinQ 41000 Series when used with the latest generation of servers. We tested Layer 2 performance, compared iSCSI hardware initiator offload performance with that of the software initiator on a leading competitor's adapter, and evaluated the Marvell FastLinQ 41000 Series in a hyper-converged Storage Spaces Direct (S2D) cluster with SCM and NVMe storage.

Related report: Marvell FastLinQ 41000 Series 25GbE Performance

Report: Marvell FastLinQ 41000 Series 10GbE Performance, iSCSI Offload Competitive Evaluation and Storage Spaces Direct Use Cases (PDF, 3 MB)

We are pleased to announce that Principled Technologies has acquired Demartek.
